Wading Into the Generative Music Debate

AI-generated music continues to be a hot topic, so it’s time to check in on where we are right now. From an ethical perspective, we have a few different classes of companies. There are those that knowingly trained on content without authorization (claiming fair use or some other justification), and Suno is the poster child for this category. Then we have companies that trained ethically from the beginning, like Beatoven.ai. And then there are companies that moved from the first category to the second, like Udio, although they are still on that journey, with some lawsuits outstanding.

There’s been a lot of momentum against Suno in particular, driven by the ongoing lawsuits and the Say No To Suno campaign. Mikey Shulman, Suno’s CEO, continues to make statements that are, shall we say, provocative. He famously said that musicians don’t like the act of producing music. He keeps describing Suno as an experience centered on music and gaming, and he has talked about democratizing music until a billion people are making it with Suno. At times it feels less like a platform for producing music and more like a social network built around generated music at scale. I’m thinking about this because two YouTubers I keep an eye on published AI-related content this month, and it’s worth taking a look.

The Core Issues

YouTube creators Rick Beato and Adam Neely both dedicated episodes to AI in February. It’s not as if either has been silent on the subject, but these are an odd pair of bookends: a short, intense screed from Rick about how AI artists’ social media metrics just don’t add up, followed by an unusually long and (as usual) well-crafted narrative from Adam that walks us through an intricate refutation of everything Suno stands for.

I’ll hand it to Adam Neely: he explores the topic thoroughly. But at over 90 minutes, it’s a long listen, even at 1.25x speed. I confess I used 1.25x, and it sucks, because the music isn’t resampled correctly, so I couldn’t really hear all those beautiful Adam Neely bumpers and fills. Anyway, it’s a fabulous journey he takes you on, movie length and movie quality. And it contains one thought experiment that is compelling and worth recounting, because it puts all of this into perspective with respect to human creativity. He found this question in A Call for New Aesthetics and recast it for AI music:

If jazz didn’t exist, could you prompt Suno to create it?

Mind blown. Thanks, Adam.

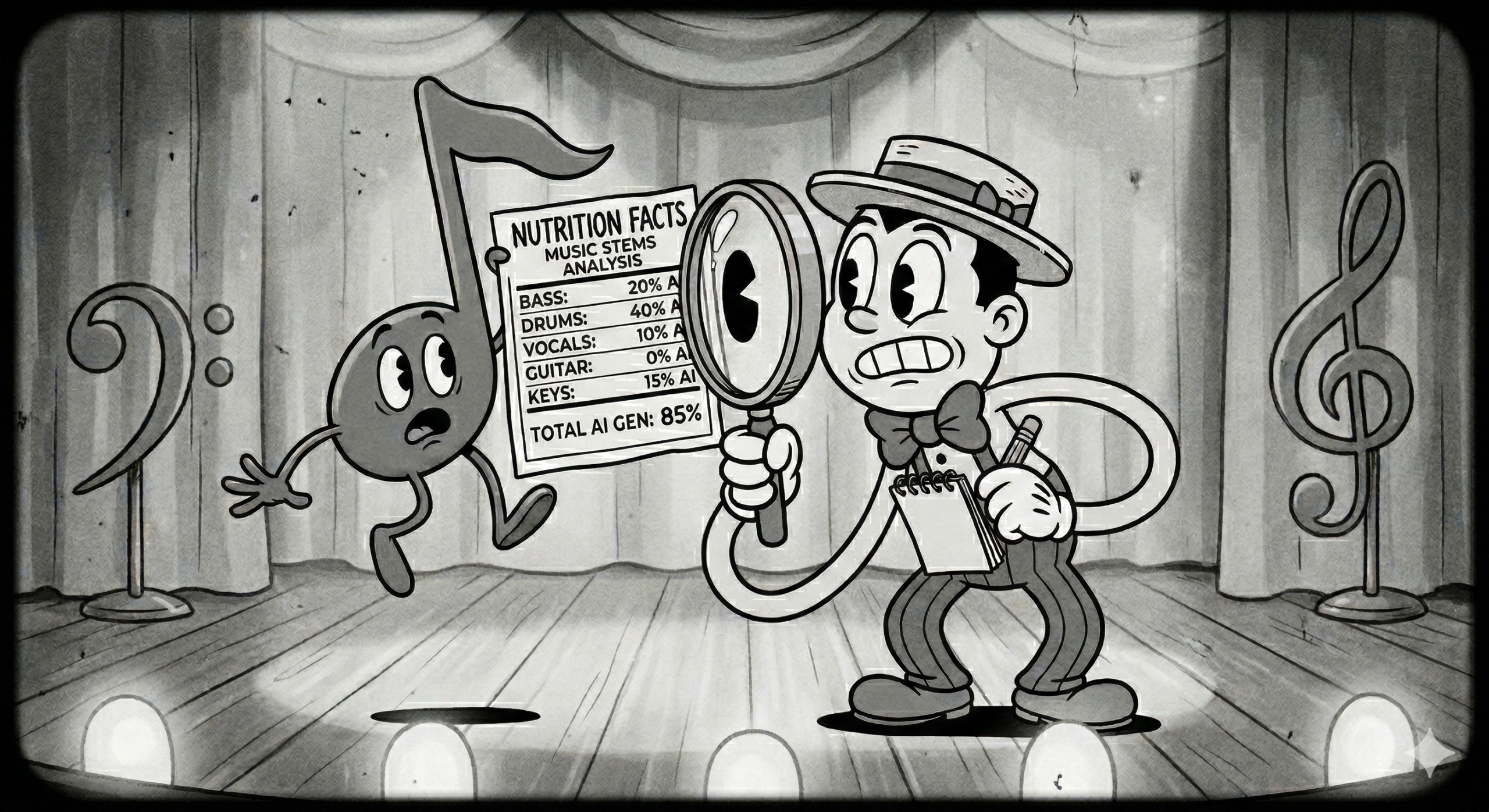

Rick’s video was really a screed about AI artists. He spent most of it comparing social media statistics to identify artists who are likely not human. That was interesting, but it was punctuated by a ranting call to action to label music so we know what’s in it. He uses the analogy of a nutrition label. We use the same analogy with C2PA (the Coalition for Content Provenance and Authenticity standard). In fact, C2PA manifests are built around “ingredients,” the source assets that went into a piece of content. I’m sure Rick hasn’t heard of C2PA yet, but it holds a solution to his problem.

The Human Learning Argument

One common argument in support of treating AI training as fair use is, “AI uses the same process humans use.” The human process is that you listen to a lot of music, you are influenced by it, and then you create something that sounds like what you listened to. However, this argument breaks down pretty quickly.

The first problem is scale. If you wanted to write a song in the style of your favorite pop star the way an AI model does, you’d take every single that artist has released and train yourself on them. That’s at least 40 or 50 songs, maybe 100. You’d have to learn them, transcribe them, memorize them, and practice them well enough to play any part of any song in any order, seamlessly and with no mistakes. Humans aren’t capable of this. It feels like the same process, but the brain cannot compete with AI at absorbing all that music and delivering it perfectly. Ironically, that is one of the things that makes human performances compelling: they are imperfect in wonderful ways. But I digress.

The second problem is access. As a human learner, you would have legal access to the songs you are studying, either by streaming them on a service or by purchasing them as digital assets, so the artist would be rewarded for your use of their material.

Some AI companies went to considerable effort to get this data. Anthropic, for example, bought millions of second-hand books, cut the spines off, and scanned them. Suno allegedly scraped YouTube for music; the allegation is that it deliberately circumvented YouTube’s stream protections and ripped the audio from videos to save it and use it later for training.

Now, I am not a lawyer, but neither of those is fair use, in my opinion. They are both attempts to game the system, and they hurt the artists they depend on for training content in the process.

The Real Goal

Suno says it wants to democratize music. And there are ways in which Suno and others will help, by opening up music production to people who are overwhelmed by traditional tools, who wouldn’t engage without Suno’s style of production and social network, or who have disabilities or other barriers to making music. But I don’t think the goal is democratization for the sake of humankind. It’s democratization to reach one billion users, a number Shulman throws around, and one that would be absolutely fantastic for him, Suno’s shareholders, and the Suno music community, in that order. Also, you still need a computer, an internet connection, and money for a Suno subscription, so it’s not as if everyone can just use it. If Suno really wants to democratize music, it could take a lesson from Adobe, which used the India AI Impact Summit to announce free access to Firefly, Creative Cloud, and Acrobat for students up through college at over 15,000 schools in India.

My Take

The reality is that Suno and Udio are using a classic Silicon Valley regulatory hacking approach to scale fast. But they are not yet in the clear; both still have outstanding lawsuits. And “regulatory hacking” sounds very hip, but I think a more appropriate term is “regulatory contravention.” They break the law to build scale and then negotiate their way to legitimacy. I can’t argue that it doesn’t work, but I personally dislike the tactic and do not want to support companies that employ it. So I do support the “Say No To Suno” campaign, and I sincerely hope that Suno, Udio, and other companies of that ilk will find their way to truly respecting the artists whose material helps build their technology. Until then, I am going to avoid them as a rule and spend my time and attention on companies whose tactics align better with my ethics. But I am also not here to condemn people who use these tools or who work at these companies. I’ll save my criticisms for a future time when I can have an open conversation about them with Mikey Shulman. Until then, let’s keep on making music, people, in whatever way we do it!

The India AI Impact Summit 2026

The other major story of February was the India AI Impact Summit, held mid-month in New Delhi. It was a huge event, and a very important one.

In my professional circle, I saw a lot of people either talk about or actively participate in this conference, which was organized into seven “chakras,” working groups focused on different themes. A lot happened, but the “Safe and Trusted AI” chakra featured significant content on C2PA, content provenance, and authenticity, with Prime Minister Narendra Modi and IT Minister Ashwini Vaishnaw specifically highlighting the threat of fake content. Modi notably called for “glass-box transparency in digital content,” meaning that safety rules, mechanisms, and governance principles should be transparent and verifiable so that AI decision-making can be understood and trusted.

C2PA was extremely well positioned to deliver that kind of glass-box transparency. Both Adobe and Microsoft held sessions on how C2PA metadata and manifests form a “nutrition label” to combat deepfakes. C2PA’s alignment with standards bodies such as ITU and ISO also framed content authenticity as a global standard rather than just a corporate priority.

Localization is also crucial: India has 22 officially recognized languages and many more in everyday use, particularly in rural areas. Standards meet reality in India, and standards have to adjust.

The February Trend: AI Detection

For me, the biggest trend in February was the rise of AI detection. Sony made a major announcement on detection: a system that can attribute a specific percentage of an AI-generated output to each source work. It uses some really advanced techniques, including one called unlearning, in which they force the generative model to unlearn a song and then measure the impact of that unlearning on the original sources. Sony has some advantages in that it owns a lot of the source material, but lots of other companies are getting into the space, like ContentLens.ai, SoundPatrol, and humanstandard.
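To make “specific percentages” a little more concrete, here is a minimal Python sketch of one way per-source impact scores could be turned into attribution percentages. It assumes, hypothetically, that the unlearning step produces one score per source (for example, how much the model’s loss on that source rises after the generated song is unlearned). The function name and the numbers are made up for illustration. This is not Sony’s published method.

```python
# Hypothetical sketch: normalize per-source "impact" scores into attribution percentages.
# Assumption: each score measures how much the model's fit to a source worsened after
# unlearning the generated song. This is NOT Sony's actual pipeline.

def attribution_percentages(impact: dict[str, float]) -> dict[str, float]:
    """Clip negative scores to zero, then scale so the shares sum to 100."""
    # A negative change means the source was not "forgotten", so treat it as no influence.
    clipped = {src: max(score, 0.0) for src, score in impact.items()}
    total = sum(clipped.values())
    if total == 0:
        # No measurable influence from any source.
        return {src: 0.0 for src in clipped}
    return {src: 100.0 * score / total for src, score in clipped.items()}


if __name__ == "__main__":
    # Made-up scores for illustration only.
    scores = {"song_a": 0.30, "song_b": 0.15, "song_c": 0.05, "song_d": -0.02}
    for src, pct in attribution_percentages(scores).items():
        print(f"{src}: {pct:.1f}%")
```

With these made-up scores, the script prints 60.0%, 30.0%, 10.0%, and 0.0% for the four songs. Clipping negative scores is one simple design choice. A real system would also have to decide how to handle noise and how many sources to consider at all.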

C2PA works differently from these techniques. Rather than inferring an asset’s origin from the audio after the fact, it is attached to the file itself, and if properly implemented, the provenance chain grows each time the file is altered. C2PA records not just what an asset is and who created it, but also the provenance and authenticity of its ingredients. It has a structure for all of it.
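The idea of a provenance chain that grows with each edit is easier to picture as a data structure. Below is a deliberately simplified, hypothetical Python model. Each manifest records who acted, what they did, and a hash of the resulting bytes, and it lists earlier manifests as ingredients. The class and function names are illustrative assumptions. Real C2PA manifests are cryptographically signed binary structures defined by the C2PA specification, and this sketch models none of that signing or encoding.

```python
# Simplified, hypothetical model of a C2PA-style provenance chain.
# NOT the real C2PA format: real manifests are signed and embedded per the spec.
import hashlib
from dataclasses import dataclass, field


def digest(data: bytes) -> str:
    """SHA-256 hex digest used to bind a manifest to specific asset bytes."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class Manifest:
    creator: str
    action: str        # illustrative labels such as "created" or "edited"
    asset_hash: str    # hash of the asset bytes this manifest describes
    ingredients: list["Manifest"] = field(default_factory=list)

    def history(self) -> list[str]:
        """Walk the ingredients depth-first, listing the oldest steps first."""
        steps: list[str] = []
        for ingredient in self.ingredients:
            steps.extend(ingredient.history())
        steps.append(f"{self.action} by {self.creator}")
        return steps


def edit(prev: Manifest, new_bytes: bytes, creator: str) -> Manifest:
    # Each alteration adds a new manifest that cites the previous one as an ingredient,
    # so the chain grows with every edit.
    return Manifest(creator, "edited", digest(new_bytes), ingredients=[prev])


def matches(manifest: Manifest, data: bytes) -> bool:
    # Tamper check: the bytes in hand must hash to what the manifest recorded.
    return manifest.asset_hash == digest(data)


if __name__ == "__main__":
    raw = b"original mix"
    m1 = Manifest("Studio A", "created", digest(raw))
    mastered = b"mastered mix"
    m2 = edit(m1, mastered, "Mastering Engineer")
    print(m2.history())
    print(matches(m2, mastered), matches(m2, b"swapped audio"))
```

Here `m2.history()` returns `['created by Studio A', 'edited by Mastering Engineer']`, and the tamper check prints `True False`. In this toy model, a track built from several sources would simply list several ingredients, which is the “nutrition label” idea in miniature.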

You’re going to start seeing content tags on music files this year. Trust me on that. Happy February.

Disclosure: I am an advisor and consultant with ContentLens.
